Matrix Factorization with Knowledge Graph Propagation for Unsupervised Spoken Language Understanding

- 1. Matrix Factorization with Knowledge Graph Propagation for Unsupervised Spoken Language Understanding. Yun-Nung (Vivian) Chen, William Yang Wang, Anatole Gershman, Alexander I. Rudnicky. Email: yvchen@cs.cmu.edu; Website: http://vivianchen.idv.tw

- 2. OUTLINE
  - Introduction
  - Ontology Induction: Frame-Semantic Parsing
  - Structure Learning: Knowledge Graph Propagation
  - Spoken Language Understanding (SLU): Matrix Factorization
  - Experiments
  - Conclusions

- 4. A POPULAR ROBOT: BAYMAX. Baymax maintains a good spoken dialogue system and learns new knowledge to better understand and interact with people. (Big Hero 6: video content owned and licensed by Disney Entertainment, Marvel Entertainment, LLC, etc.)

- 5. SPOKEN DIALOGUE SYSTEM (SDS)
  - Spoken dialogue systems are intelligent agents that help users finish tasks more efficiently via speech interactions.
  - Spoken dialogue systems are being incorporated into various devices (smartphones, smart TVs, in-car navigation systems, etc.).
  - Examples:
    - Apple's Siri: https://www.apple.com/ios/siri/
    - Microsoft's Cortana: http://www.windowsphone.com/en-us/how-to/wp8/cortana/meet-cortana
    - Microsoft's XBOX Kinect: http://www.xbox.com/en-US/
    - Amazon's Echo: http://www.amazon.com/oc/echo/
    - Samsung's SMART TV: http://www.samsung.com/us/experience/smart-tv/
    - Google Now: https://www.google.com/landing/now/

- 6. CHALLENGES FOR SDS
  - An SDS in a new domain requires:
    1. A hand-crafted domain ontology
    2. Utterances labeled with semantic representations
    3. An SLU component for mapping utterances into semantic representations
  - As spoken interactions increase, building domain ontologies and annotating utterances becomes too costly to scale.
  - The goal is to enable an SDS to learn this knowledge automatically so that open-domain requests can be handled.

- 7. INTERACTION EXAMPLE
  - User: “find an inexpensive eating place for taiwanese food”
  - Intelligent Agent: “Inexpensive Taiwanese eating places include Din Tai Fung, etc. What do you want to choose?”
  - Q: How does a dialogue system process this request?

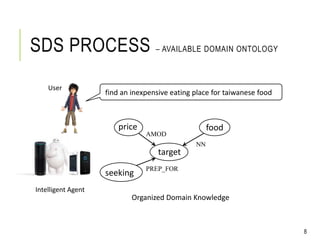

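- 8. SDS PROCESS – AVAILABLE DOMAIN ONTOLOGY
  - User to Intelligent Agent: “find an inexpensive eating place for taiwanese food”
  - Organized Domain Knowledge (figure): a graph over the slots seeking, target, price, and food, with edges labeled by the typed dependencies PREP_FOR, AMOD, and NN.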

- 9. SDS PROCESS – AVAILABLE DOMAIN ONTOLOGY
  - User to Intelligent Agent: “find an inexpensive eating place for taiwanese food”
  - Organized Domain Knowledge (figure): a graph over the slots seeking, target, price, and food, with edges labeled PREP_FOR, AMOD, and NN.
  - Label added to the figure: Ontology Induction (semantic slot)

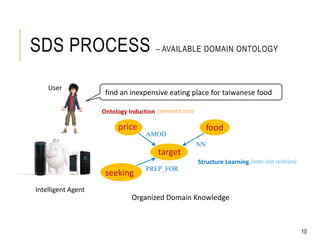

- 10. SDS PROCESS – AVAILABLE DOMAIN ONTOLOGY
  - User to Intelligent Agent: “find an inexpensive eating place for taiwanese food”
  - Organized Domain Knowledge (figure): a graph over the slots seeking, target, price, and food, with edges labeled PREP_FOR, AMOD, and NN.
  - Labels on the figure: Ontology Induction (semantic slot) and Structure Learning (inter-slot relation)

- 11. SDS PROCESS – SPOKEN LANGUAGE UNDERSTANDING (SLU)
  - User to Intelligent Agent: “find an inexpensive eating place for taiwanese food”
  - Organized Domain Knowledge (figure): slots seeking, target, price, and food, with edges labeled PREP_FOR, AMOD, and NN.
  - Spoken Language Understanding output: seeking=“find”, target=“eating place”, price=“inexpensive”, food=“taiwanese food”

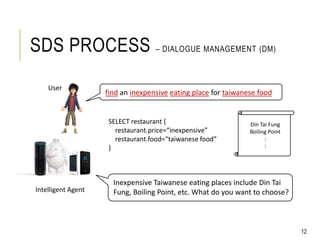

- 12. SDS PROCESS – DIALOGUE MANAGEMENT (DM)
  - User: “find an inexpensive eating place for taiwanese food”
  - Query: SELECT restaurant { restaurant.price=“inexpensive” restaurant.food=“taiwanese food” }
  - Results: Din Tai Fung, Boiling Point, …
  - Intelligent Agent: “Inexpensive Taiwanese eating places include Din Tai Fung, Boiling Point, etc. What do you want to choose?”

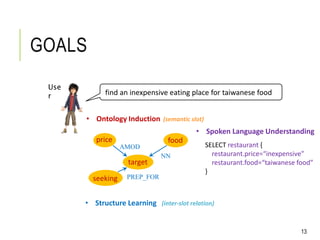
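
The dialogue management step on slide 12 can be illustrated with a minimal sketch: the slot-value pairs produced by SLU become constraints on a restaurant lookup. Everything below is an assumption made for illustration. The `Restaurant` class, the `select_restaurants` helper, and the toy database (including "Hypothetical Bistro" and all price/food attributes) are not from the slides; only the two restaurant names and the slot values are.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Restaurant:
    name: str
    price: str
    food: str


# Toy database (assumption): only the first two names appear on the slide;
# the attributes and the third entry are made up for illustration.
RESTAURANTS = [
    Restaurant("Din Tai Fung", "inexpensive", "taiwanese food"),
    Restaurant("Boiling Point", "inexpensive", "taiwanese food"),
    Restaurant("Hypothetical Bistro", "expensive", "french food"),
]

# Slots that constrain the database lookup (assumption).
CONSTRAINT_SLOTS = ("price", "food")


def select_restaurants(frame, database):
    """Return names of restaurants matching the constraint slots in the frame."""
    constraints = {k: v for k, v in frame.items() if k in CONSTRAINT_SLOTS}
    return [
        r.name
        for r in database
        if all(getattr(r, slot) == value for slot, value in constraints.items())
    ]


# Semantic frame as produced by SLU on slide 11.
frame = {
    "seeking": "find",
    "target": "eating place",
    "price": "inexpensive",
    "food": "taiwanese food",
}
matches = select_restaurants(frame, RESTAURANTS)
print(matches)
```

The slots seeking and target are ignored here because, in this toy setup, they identify the request type rather than a database attribute.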

- 13. GOALS
  - User: “find an inexpensive eating place for taiwanese food”
  - Ontology Induction (semantic slot)
  - Structure Learning (inter-slot relation)
  - Spoken Language Understanding
  - Figure: the slot graph (seeking, target, price, food; edges PREP_FOR, AMOD, NN) and the query SELECT restaurant { restaurant.price=“inexpensive” restaurant.food=“taiwanese food” }

- 14. GOALS
  - User: “find an inexpensive eating place for taiwanese food”
  - Knowledge Acquisition: Ontology Induction, Structure Learning
  - SLU Modeling: Spoken Language Understanding

- 15. SPOKEN LANGUAGE UNDERSTANDING
  - Input: user utterances
  - Output: the domain-specific semantic concepts included in each utterance
  - Example: “can I have a cheap restaurant” → SLU Model → target=“restaurant”, price=“cheap”
  - Framework (figure):
    - Ontology Induction: Unlabeled Collection → Frame-Semantic Parsing → Feature Model (Fw, Fs)
    - Structure Learning: Lexical KG and Semantic KG → Knowledge Graph Propagation Model, made of the Word Relation Model (Rw) and the Slot Relation Model (Rs)
    - SLU Modeling by Matrix Factorization: combines the Feature Model and the Knowledge Graph Propagation Model to produce the Semantic Representation

- 17. PROBABILISTIC FRAME-SEMANTIC PARSING
  - FrameNet [Baker et al., 1998]
    - a linguistic semantic resource based on frame-semantics theory
    - words/phrases can be represented as frames
    - example: in “low fat milk”, “milk” evokes the “food” frame and “low fat” fills the descriptor frame element
  - SEMAFOR [Das et al., 2014]
    - a state-of-the-art frame-semantics parser, trained on manually annotated FrameNet sentences
  - References
    - Baker et al., "The Berkeley FrameNet Project," in Proc. of International Conference on Computational Linguistics, 1998.
    - Das et al., "Frame-Semantic Parsing," Computational Linguistics, 2014.

- 18. FRAME-SEMANTIC PARSING FOR UTTERANCES
  - Utterance: “can i have a cheap restaurant”
  - Frame: capability; FT LU: can; FE LU: i
  - Frame: expensiveness; FT LU: cheap
  - Frame: locale by use; FT/FE LU: restaurant
  - Slide annotations: “Good!” for the two domain-relevant frames (expensiveness, locale by use) and “?” for the generic capability frame
  - 1st Issue: adapting generic frames to domain-specific settings for SDSs
  - FT: Frame Target; FE: Frame Element; LU: Lexical Unit

- 19. SPOKEN LANGUAGE UNDERSTANDING
  - Input: user utterances
  - Output: the domain-specific semantic concepts included in each utterance
  - Example: “can I have a cheap restaurant” → SLU Model → target=“restaurant”, price=“cheap”
  - Framework (figure, as on slide 15): Ontology Induction (Frame-Semantic Parsing, Feature Model Fw/Fs), Structure Learning (Knowledge Graph Propagation Model Rw/Rs), and SLU Modeling by Matrix Factorization
  - Reference: Y.-N. Chen et al., "Matrix Factorization with Knowledge Graph Propagation for Unsupervised Spoken Language Understanding," in Proc. of ACL-IJCNLP, 2015.

- 20. OUTLINE
  - Introduction
  - Ontology Induction: Frame-Semantic Parsing
  - Structure Learning: Knowledge Graph Propagation (for 1st issue)
  - Spoken Language Understanding (SLU): Matrix Factorization
  - Experiments
  - Conclusions

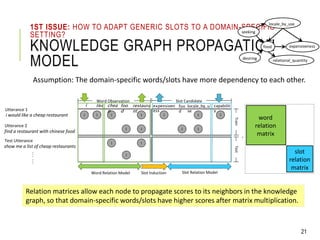

- 21. 1ST ISSUE: HOW TO ADAPT GENERIC SLOTS TO A DOMAIN-SPECIFIC SETTING? KNOWLEDGE GRAPH PROPAGATION MODEL
  - Assumption: domain-specific words/slots depend on each other more strongly.
  - Relation matrices allow each node to propagate scores to its neighbors in the knowledge graph, so that domain-specific words/slots have higher scores after matrix multiplication.
  - Figure: a binary utterance-by-feature matrix multiplied by the relation matrices.
    - Rows: training utterances (Utterance 1: “i would like a cheap restaurant”; Utterance 2: “find a restaurant with chinese food”) and a test utterance (“show me a list of cheap restaurants”)
    - Columns: word observations (e.g., cheap, restaurant, food, i, like) and slot candidates from slot induction (e.g., expensiveness, locale_by_use, food, capability)
    - Word Relation Model: word relation matrix; Slot Relation Model: slot relation matrix
    - Slot graph nodes shown: locale_by_use, food, expensiveness, seeking, relational_quantity, desiring
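
The propagation idea on slide 21 can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the word list, the binary observation matrix `F_w`, and especially the relation weights in `R_w` are toy values chosen by hand, not values from the slides or the paper.

```python
import numpy as np

# Toy vocabulary (assumption): three domain words and one generic word.
words = ["cheap", "restaurant", "food", "i"]

# Binary word observations, one row per utterance (toy values).
F_w = np.array([
    [1.0, 1.0, 0.0, 1.0],  # "i would like a cheap restaurant"
    [0.0, 1.0, 1.0, 0.0],  # "find a restaurant with chinese food"
])

# Hypothetical word relation matrix: domain words are related to each other,
# while the generic word "i" is related only to itself.
R_w = np.array([
    [1.0, 0.6, 0.3, 0.0],
    [0.6, 1.0, 0.5, 0.0],
    [0.3, 0.5, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
])

# One propagation step: each word passes its score to its graph neighbors.
propagated = F_w @ R_w

for utterance_scores in propagated:
    print(dict(zip(words, np.round(utterance_scores, 2))))
```

In the first utterance, the mutually related words "cheap" and "restaurant" end up with higher scores than the isolated word "i", and the unobserved word "food" receives a nonzero score from its neighbors.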

- 22. KNOWLEDGE GRAPH CONSTRUCTION
  - Syntactic dependency parsing on utterances, e.g., “can i have a cheap restaurant” (dependency labels shown: ccomp, amod, dobj, nsubj, det)
  - Word-based lexical knowledge graph: nodes are words (can, i, have, a, cheap, restaurant)
  - Slot-based semantic knowledge graph: nodes are slots (capability, expensiveness, locale_by_use)

- 23. KNOWLEDGE GRAPH CONSTRUCTION
  - Word-based lexical knowledge graph: nodes are words (can, i, have, a, cheap, restaurant)
  - Slot-based semantic knowledge graph: nodes are slots (capability, expensiveness, locale_by_use)
  - The edge between a node pair is weighted by relation importance, and scores are propagated via a relation matrix.
  - How to decide the weights that represent relation importance?

- 24. WEIGHT MEASUREMENT BY EMBEDDINGS
  - Dependency-based word embeddings, learned from the dependency parse of utterances such as “can i have a cheap restaurant” (ccomp, amod, dobj, nsubj, det): can = [0.8 … 0.24], have = [0.3 … 0.21], …
  - Dependency-based slot embeddings, learned from the same parse with slot labels (capability, expensiveness, locale_by_use) in place of the words that evoke them: expensiveness = [0.12 … 0.7], capability = [0.3 … 0.6], …
  - Reference: Levy and Goldberg, "Dependency-Based Word Embeddings," in Proc. of ACL, 2014.

- 25. WEIGHT MEASUREMENT BY EMBEDDINGS
  - Compute edge weights to represent relation importance:
    - Slot-to-slot semantic relation $R_s^{S}$: similarity between slot embeddings
    - Slot-to-slot dependency relation $R_s^{D}$: dependency score between slot embeddings
    - Word-to-word semantic relation $R_w^{S}$: similarity between word embeddings
    - Word-to-word dependency relation $R_w^{D}$: dependency score between word embeddings
  - Combined relations: $R_w^{SD} = R_w^{S} + R_w^{D}$ and $R_s^{SD} = R_s^{S} + R_s^{D}$
  - Figure: a graph with word nodes w1 to w7 and slot nodes s1 to s3
  - Reference: Y.-N. Chen et al., "Jointly Modeling Inter-Slot Relations by Random Walk on Knowledge Graphs for Unsupervised Spoken Language Understanding," in Proc. of NAACL, 2015.
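
A minimal sketch of how the combined relation matrix on slide 25 could be assembled, assuming cosine similarity as the similarity measure for the semantic relation. The slides say only "similarity" and "dependency score", so the cosine choice, the 2-dimensional toy embeddings, and the hand-written dependency scores in `R_D` are all assumptions made for illustration.

```python
import numpy as np


def cosine_similarity_matrix(embeddings):
    """Pairwise cosine similarity between the rows of `embeddings`."""
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)  # guard against zero vectors
    return unit @ unit.T


# Toy 2-dimensional embeddings for three nodes (hypothetical values).
E = np.array([
    [1.0, 0.0],
    [1.0, 1.0],
    [0.0, 1.0],
])

# Semantic relation R^S: similarity between embeddings.
R_S = cosine_similarity_matrix(E)

# Dependency relation R^D: hypothetical symmetric dependency scores.
R_D = np.array([
    [0.0, 0.4, 0.0],
    [0.4, 0.0, 0.2],
    [0.0, 0.2, 0.0],
])

# Combined relation, following R^{SD} = R^S + R^D from the slide.
R_SD = R_S + R_D
print(np.round(R_SD, 3))
```

The same function applies to both word embeddings and slot embeddings, which would give $R_w^{S}$ and $R_s^{S}$ respectively.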

- 26. KNOWLEDGE GRAPH PROPAGATION MODEL
  - Figure: the utterance-by-feature matrix (word observations and slot candidates from slot induction, for training and test utterances) multiplied by the word relation matrix $R_w^{SD}$ (Word Relation Model) and the slot relation matrix $R_s^{SD}$ (Slot Relation Model).

- 27. FEATURE MODEL
  - Figure: the utterance-by-feature matrix built by Ontology Induction, with word observations (Fw) and slot candidates (Fs).
    - Rows: training utterances (Utterance 1: “i would like a cheap restaurant”; Utterance 2: “find a restaurant with chinese food”) and a test utterance (“show me a list of cheap restaurants”)
    - Observed entries are 1; the remaining entries carry estimated probabilities (e.g., .97, .90, .95, .85, .93, .92, .98, .05, .05) that represent hidden semantics
  - 2nd Issue: unobserved hidden semantics may benefit understanding

- 28. OUTLINE
  - Introduction
  - Ontology Induction: Frame-Semantic Parsing
  - Structure Learning: Knowledge Graph Propagation
  - Spoken Language Understanding (SLU): Matrix Factorization (for 2nd issue)
  - Experiments
  - Conclusions

- 29. 2ND ISSUE: HOW TO LEARN IMPLICIT SEMANTICS? MATRIX FACTORIZATION (MF)
  - Reasoning with Matrix Factorization: the MF method completes a partially missing matrix based on a low-rank latent semantics assumption.
  - Figure: the utterance-by-feature matrix (word observations and slot candidates), propagated through the relation matrices $R_w^{SD}$ and $R_s^{SD}$, with 1 for observed entries and estimated probabilities (e.g., .97, .90, .05) for unobserved ones.

- 30. MATRIX FACTORIZATION (MF)
  - The decomposed matrices represent low-rank latent semantics for utterances and for words/slots, respectively.
  - The product of the two matrices fills in the probabilities of hidden semantics.
  - Dimensions: $|U| \times (|W| + |S|) \;\approx\; \big(|U| \times d\big) \cdot \big(d \times (|W| + |S|)\big)$, where $|U|$, $|W|$, and $|S|$ are the numbers of utterances, words, and slots, and $d$ is the latent dimension.

- 31. BAYESIAN PERSONALIZED RANKING FOR MF
  - Model implicit feedback:
    - do not treat unobserved facts as negative samples (true or false)
    - give observed facts higher scores than unobserved facts
  - Objective (formula shown as an image on the slide): for each utterance $u$, an observed fact $f^+$ should score higher than the unobserved facts $f^-$.
  - The objective is to learn a set of well-ranked semantic slots per utterance.
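
The ranking idea on slides 30 and 31 can be sketched as a small matrix factorization trained with a pairwise objective. The sketch below uses the standard BPR form, maximizing $\ln \sigma(\theta_{f^+} - \theta_{f^-})$ over sampled (observed, unobserved) pairs with L2 regularization; whether this matches the exact formula on the slide is an assumption, since the formula is not recoverable from the transcript. The function name `train_bpr_mf`, the hyperparameters, and the toy matrix `M` are hypothetical.

```python
import numpy as np


def train_bpr_mf(M, d=4, lr=0.1, reg=0.01, epochs=1000, seed=0):
    """Factorize a binary utterance-by-feature matrix with a pairwise ranking loss.

    Returns P (utterances x d) and Q (features x d); scores are P @ Q.T.
    """
    rng = np.random.default_rng(seed)
    n_utt, n_feat = M.shape
    P = 0.1 * rng.standard_normal((n_utt, d))
    Q = 0.1 * rng.standard_normal((n_feat, d))
    observed = [np.flatnonzero(M[u] > 0) for u in range(n_utt)]
    unobserved = [np.flatnonzero(M[u] == 0) for u in range(n_utt)]

    for _ in range(epochs):
        for u in range(n_utt):
            if len(observed[u]) == 0 or len(unobserved[u]) == 0:
                continue
            pos = rng.choice(observed[u])    # observed fact f+
            neg = rng.choice(unobserved[u])  # unobserved fact f-
            diff = P[u] @ (Q[pos] - Q[neg])
            # Derivative of ln(sigmoid(diff)) with respect to diff.
            g = 1.0 / (1.0 + np.exp(diff))
            p_u = P[u].copy()
            # Gradient ascent on the ranking objective with L2 regularization.
            P[u] += lr * (g * (Q[pos] - Q[neg]) - reg * P[u])
            Q[pos] += lr * (g * p_u - reg * Q[pos])
            Q[neg] += lr * (-g * p_u - reg * Q[neg])
    return P, Q


# Toy binary matrix (assumption): 3 utterances x 5 word/slot features.
M = np.array([
    [1, 1, 0, 1, 0],
    [0, 1, 1, 0, 1],
    [1, 1, 0, 0, 0],
])
P, Q = train_bpr_mf(M)
scores = P @ Q.T  # low-rank reconstruction; fills in unobserved entries
print(np.round(scores, 2))
```

Unobserved entries are never pushed toward zero directly. They are only ranked below observed entries of the same utterance, which is what lets the model assign a high score to a plausible but unobserved slot.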

- 34. EXPERIMENTAL SETUP
  - Dataset: Cambridge University SLU corpus [Henderson et al., 2012]
    - Restaurant recommendation in an in-car setting in Cambridge
    - WER = 37%
    - Vocabulary size = 1,868
    - 2,166 dialogues
    - 15,453 utterances
    - Dialogue slots: addr, area, food, name, phone, postcode, price range, task, type
  - Figure: the mapping table between induced and reference slots
  - Reference: Henderson et al., "Discriminative spoken language understanding using word confusion networks," in Proc. of SLT, 2012.

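- 38. EXPERIMENT 1: QUALITY OF SEMANTICS ESTIMATION
  - Metric: Mean Average Precision (MAP, in %) of all estimated slot probabilities for each utterance
  - Explicit approaches:

    | Approach | ASR | Manual |
    |---|---|---|
    | Support Vector Machine | 32.5 | 36.6 |
    | Multinomial Logistic Regression | 34.0 | 38.8 |

  - Modeling implicit semantics, without and with the explicit model:

    | Approach | ASR, w/o Explicit | ASR, w/ Explicit | Manual, w/o Explicit | Manual, w/ Explicit |
    |---|---|---|---|---|
    | Baseline: Random | 3.4 | 22.5 | 2.6 | 25.1 |
    | Baseline: Majority | 15.4 | 32.9 | 16.4 | 38.4 |
    | MF: Feature Model | 24.2 | 37.6* | 22.6 | 45.3* |
    | MF: Feature Model + Knowledge Graph Propagation | 40.5* (+19.1%) | 43.5* (+27.9%) | 52.1* (+34.3%) | 53.4* (+37.6%) |

  - The percentages in parentheses equal the relative gain over Multinomial Logistic Regression in the same condition (e.g., 40.5 / 34.0 ≈ 1.191).
  - The MF approach effectively models hidden semantics to improve SLU.
  - Adding a knowledge graph propagation model further improves performance.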
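
For readers unfamiliar with the metric, here is a minimal sketch of Mean Average Precision over per-utterance slot rankings. It uses the common definition of average precision (precision at each rank where a reference slot appears, averaged over the reference slots); the exact evaluation script behind the reported numbers is not given in the slides, so treat this as an assumption. The slot names come from the corpus description, while the scores and reference sets are made up.

```python
def average_precision(scores, relevant):
    """Average precision of one ranking.

    scores: dict mapping slot name to estimated probability.
    relevant: set of reference slots for the utterance.
    """
    ranked = sorted(scores, key=scores.get, reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, slot in enumerate(ranked, start=1):
        if slot in relevant:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / len(relevant) if relevant else 0.0


def mean_average_precision(predictions, references):
    """Mean of the per-utterance average precisions."""
    aps = [average_precision(s, r) for s, r in zip(predictions, references)]
    return sum(aps) / len(aps)


# Toy predictions for two utterances (hypothetical probabilities).
predictions = [
    {"price range": 0.9, "food": 0.8, "task": 0.2, "area": 0.1},
    {"area": 0.9, "type": 0.5, "food": 0.3},
]
references = [{"price range", "food"}, {"food"}]

map_score = mean_average_precision(predictions, references)
print(round(map_score, 4))
```

In the first utterance both reference slots are ranked on top, so its average precision is 1. In the second, the only reference slot is ranked third, giving 1/3.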

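- 40. EXPERIMENT 2: EFFECTIVENESS OF RELATIONS

  | Approach | Relation matrix | ASR | Manual |
  |---|---|---|---|
  | Feature Model | (none) | 37.6 | 45.3 |
  | Feature + Knowledge Graph Propagation: Semantic | $\begin{bmatrix} R_w^{S} & 0 \\ 0 & R_s^{S} \end{bmatrix}$ | 41.4* | 51.6* |
  | Feature + Knowledge Graph Propagation: Dependency | $\begin{bmatrix} R_w^{D} & 0 \\ 0 & R_s^{D} \end{bmatrix}$ | 41.6* | 49.0* |
  | Feature + Knowledge Graph Propagation: Word | $\begin{bmatrix} R_w^{SD} & 0 \\ 0 & 0 \end{bmatrix}$ | 39.2* | 45.2 |
  | Feature + Knowledge Graph Propagation: Slot | $\begin{bmatrix} 0 & 0 \\ 0 & R_s^{SD} \end{bmatrix}$ | 42.1* | 49.9* |
  | Feature + Knowledge Graph Propagation: Both | $\begin{bmatrix} R_w^{SD} & 0 \\ 0 & R_s^{SD} \end{bmatrix}$ | 43.5* (+15.7%) | 53.4* (+17.9%) |

  - The percentages in parentheses equal the relative gain over the Feature Model (e.g., 43.5 / 37.6 ≈ 1.157).
  - All types of relations are useful to infer hidden semantics.
  - Combining different relations further improves the performance.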
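
The block-diagonal relation matrices in the table can be assembled with `numpy.block`. The sketch below is an illustration under stated assumptions: the helper name `combine_relations`, the toy relation values, and the toy feature matrices are hypothetical, and the actual experiments may normalize or scale the matrices differently.

```python
import numpy as np


def combine_relations(R_w, R_s):
    """Stack a word relation matrix and a slot relation matrix block-diagonally."""
    n_w, n_s = R_w.shape[0], R_s.shape[0]
    return np.block([
        [R_w, np.zeros((n_w, n_s))],
        [np.zeros((n_s, n_w)), R_s],
    ])


# Toy relation matrices (hypothetical values): 3 words, 2 slots.
R_w = np.array([
    [1.0, 0.6, 0.3],
    [0.6, 1.0, 0.5],
    [0.3, 0.5, 1.0],
])
R_s = np.array([
    [1.0, 0.7],
    [0.7, 1.0],
])

# Feature matrix [F_w F_s]: word observations next to slot candidates.
F_w = np.array([[1.0, 1.0, 0.0], [0.0, 1.0, 1.0]])
F_s = np.array([[1.0, 0.0], [0.0, 1.0]])
F = np.hstack([F_w, F_s])

R_both = combine_relations(R_w, R_s)  # the "Both" configuration
R_word = combine_relations(R_w, np.zeros_like(R_s))  # the "Word" configuration

propagated = F @ R_both
print(np.round(propagated, 2))
```

Because the off-diagonal blocks are zero, word scores propagate only among words and slot scores only among slots, so the result equals propagating each half separately.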

- 42. CONCLUSIONS
  - Ontology induction and knowledge graph construction enable systems to automatically acquire open-domain knowledge.
  - MF for SLU provides a principled model that unifies the automatically acquired knowledge, adapts it to a domain-specific setting, and then allows systems to consider implicit semantics for better understanding.
  - The work shows the feasibility and potential of improving the generalization, maintenance, efficiency, and scalability of SDSs.
  - The proposed unsupervised SLU achieves a MAP of 43% on ASR-transcribed conversations.

- 43. Q & A. Thanks for your attention!

Editor's Notes

- #7: A new domain requires 1) a hand-crafted ontology, 2) slot representations for utterances (labelled info), and 3) a parser for mapping utterances into semantic representations. The labelling cost is too high, so I want to automate this process.

- #8: How can the system find a restaurant?

- #9: Domain knowledge representation (graph)

- #10: Domain knowledge representation (graph)

- #11: Domain knowledge representation (graph)

- #12: Domain knowledge representation (graph)

- #13: Map lexical unit into concepts

- #19: SEMAFOR outputs set of frames; hopefully includes all domain slots; pick some slots from SEMAFOR

- #23: What’s the weight for each edge?

- #24: What’s the weight for each edge?

- #26: Graph for all utterances
